Scientists find a way to enhance the performance of quantum computers

USC (University of Southern California) scientists have demonstrated a theoretical method to enhance the performance of quantum computers, an important step toward scaling a technology with the potential to solve some of society’s biggest challenges.

The method addresses a weakness that bedevils the performance of next-generation computers by suppressing erroneous calculations while increasing the fidelity of results, a critical step before the machines can outperform classical computers as intended. Called “dynamical decoupling,” it worked on two quantum computers, proved easier and more reliable than other remedies and could be accessed via the cloud, a first for dynamical decoupling.

The technique administers staccato bursts of tiny, focused energy pulses to offset ambient disturbances that muck up sensitive computations. The researchers report they were able to sustain a quantum state up to three times longer than it would last without such control.

“This is a step forward,” said Daniel Lidar, professor of electrical engineering, chemistry and physics at USC and director of the USC Center for Quantum Information Science and Technology (CQIST). “Without error suppression, there’s no way quantum computing can overtake classical computing.”

The results were published on Nov. 29, 2018, in the journal Physical Review Letters. Lidar is the Viterbi Professor of Engineering at USC and corresponding author of the study; he led a team of researchers at CQIST, which is a collaboration between the USC Viterbi School of Engineering and the USC Dornsife School of Letters, Arts and Sciences. IBM and Bay Area startup Rigetti Computing provided cloud access to their quantum computers.

Quantum computers have the potential to make today’s supercomputers obsolete and propel breakthroughs in medicine, finance and defense capabilities. They could help optimize new drug therapies, models for climate change and designs for new machines, and could lead to faster delivery of products, lower costs for manufactured goods and more efficient transportation. They are powered by qubits, the subatomic workhorses and building blocks of quantum computing.

But qubits are prone to error and need stability to sustain computations. When they don’t operate correctly, they produce poor results, which limits their capabilities relative to traditional computers. Scientists worldwide have yet to achieve a “quantum advantage” — the point where a quantum computer outperforms a conventional computer on any task.

The problem is “noise,” a catch-all descriptor for perturbations such as sound, temperature and vibration. Noise can destabilize qubits and cause “decoherence,” an upset that shortens the lifetime of the quantum state and so reduces the time during which a quantum computer can perform a task while achieving accurate results.

“Noise and decoherence have a large impact and ruin computations, and a quantum computer with too much noise is useless,” Lidar explained. “But if you can knock down the problems associated with noise, then you start to approach the point where quantum computers become more useful than classic computers.”

USC is the only university in the world with a quantum computer; its 1098-qubit D-Wave quantum annealer specializes in solving optimization problems. Part of the USC-Lockheed Martin Center for Quantum Computing, it’s located at USC’s Information Sciences Institute. However, the latest research findings were achieved not on the D-Wave machine, but on smaller-scale, general-purpose quantum computers: IBM’s 16-qubit QX5 and Rigetti’s 19-qubit Acorn.

To achieve dynamical decoupling (DD), the researchers bathed the superconducting qubits with tightly focused, timed pulses of minute electromagnetic energy. By manipulating the pulses, the scientists were able to envelop the qubits in a microenvironment that is sequestered, or decoupled, from surrounding ambient noise, thus prolonging the quantum state.
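The decoupling idea can be illustrated with a toy numerical model. The sketch below is not the study's pulse sequence or hardware model; it assumes a single qubit prepared in a superposition state, slowly varying "dephasing" noise (a random offset plus a random-walk drift), and ideal, instantaneous, evenly spaced flip pulses. The function name `mean_fidelity` and all noise parameters are hypothetical choices made for illustration.

```python
import numpy as np


def mean_fidelity(n_pulses, total_time=1.0, n_steps=400, n_shots=2000, seed=0):
    """Average fidelity of a superposition state under slow dephasing noise.

    Toy model (an assumption, not the setup used in the study):
    - each shot has a random frequency offset plus a slow random-walk drift;
    - each ideal flip pulse reverses the direction of phase accumulation;
    - pulses are evenly spaced, at times (k - 1/2) * total_time / n_pulses.
    """
    rng = np.random.default_rng(seed)
    dt = total_time / n_steps

    # Noise per shot and time step: static offset + slowly drifting part.
    static = rng.normal(0.0, 4.0, size=(n_shots, 1))
    drift = np.cumsum(rng.normal(0.0, 0.1, size=(n_shots, n_steps)), axis=1)
    detuning = static + drift

    # Count how many pulses have fired before each time step's midpoint;
    # the sign of phase accumulation flips with every pulse.
    t_mid = (np.arange(n_steps) + 0.5) * dt
    pulses_so_far = np.floor(t_mid * n_pulses / total_time + 0.5).astype(int)
    sign = np.where(pulses_so_far % 2 == 0, 1.0, -1.0)

    # Net phase error per shot, then overlap with the initial state.
    phase = (detuning * sign).sum(axis=1) * dt
    return float(np.mean(np.cos(phase / 2.0) ** 2))


if __name__ == "__main__":
    for n in (0, 1, 2, 4, 8):
        print(f"{n:2d} pulses -> mean fidelity {mean_fidelity(n):.3f}")
```

In this model the pulses cancel the constant part of the noise exactly and the slowly drifting part approximately, so the simulated fidelity with pulses is well above the no-pulse case. Real devices also suffer from imperfect pulses, which this sketch ignores.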

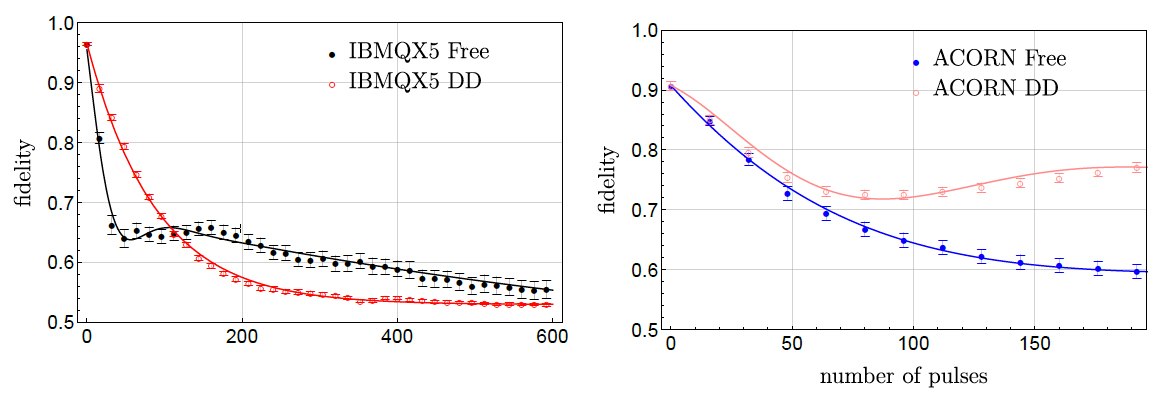

Mean fidelity as a function of number of pulses

“We tried a simple mechanism to reduce error in the machines that turned out to be effective,” said Bibek Pokharel, an electrical engineering doctoral student at USC Viterbi and first author of the study.

The time sequences for the experiments were exceedingly short, with up to 200 pulses spanning up to 600 nanoseconds. One-billionth of a second, or a nanosecond, is how long it takes for light to travel about one foot.
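The light-travel comparison can be checked with a few lines of arithmetic. The sketch below uses the defined speed of light in vacuum and the standard foot-to-meter conversion; the function name `light_travel_feet` is just an illustrative label.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter
METERS_PER_FOOT = 0.3048              # exact, international foot


def light_travel_feet(seconds):
    """Distance in feet that light covers in vacuum in the given time."""
    return SPEED_OF_LIGHT_M_PER_S * seconds / METERS_PER_FOOT


if __name__ == "__main__":
    # One nanosecond: a little under one foot.
    print(f"1 ns   -> {light_travel_feet(1e-9):.2f} ft")
    # The longest sequence mentioned above, 600 nanoseconds.
    print(f"600 ns -> {light_travel_feet(600e-9):.0f} ft")
```

So the longest pulse sequence in the experiments lasted about as long as light takes to cross a few hundred feet.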

For the IBM quantum computer, final fidelity improved threefold, from 28.9 percent to 88.4 percent. For the Rigetti quantum computer, the improvement in final fidelity was a more modest 17 percentage points, from 59.8 percent to 77.1 percent, according to the study. The scientists tested how long fidelity improvement could be sustained and found that more pulses always improved matters for the Rigetti computer, while there was a limit of about 100 pulses for the IBM computer.
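The two fidelity figures are easier to compare when both the ratio and the gain in percentage points are worked out. The short sketch below does that arithmetic using only the numbers quoted above; the helper name `improvement` is hypothetical.

```python
def improvement(before, after):
    """Return (ratio, percentage-point gain) for two fidelities in percent."""
    return after / before, after - before


ibm_ratio, ibm_points = improvement(28.9, 88.4)
rigetti_ratio, rigetti_points = improvement(59.8, 77.1)

if __name__ == "__main__":
    # IBM: roughly a threefold increase, about 59.5 points.
    print(f"IBM:     x{ibm_ratio:.2f}, +{ibm_points:.1f} points")
    # Rigetti: roughly a 1.29-fold increase, about 17.3 points.
    print(f"Rigetti: x{rigetti_ratio:.2f}, +{rigetti_points:.1f} points")
```

The "threefold" figure for IBM is a ratio, while the "17" for Rigetti is a difference in percentage points; as a ratio, the Rigetti gain is about 29 percent.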

Overall, the findings show the DD method works better than other quantum error correction methods that have been attempted so far, Lidar said.

“To the best of our knowledge,” the researchers wrote, “this amounts to the first unequivocal demonstration of successful decoherence mitigation in cloud-based superconducting qubit platforms … we expect that the lessons drawn will have wide applicability.”